A Formal Model of Epistemic Uncertainty and the Structural Conditions for Conscious Observation

ABSTRACT

This paper introduces the Recombination Illusion as a formal model of epistemic uncertainty derived from a simple arithmetic operation: 2 + 2 = 4 followed by bipartite division. When constituent units are individuated and no partition is privileged, the probability of recovering the original pairings is exactly 1/3, a combinatorial result that is invariant under relabeling and independent of empirical frequency. I argue that this structure models a fundamental epistemic predicament: perception presents reconfigured wholes whose originary compositions are inaccessible, yet observers routinely conflate perceptual indiscernibility with ontological identity, an error I term the recombination fallacy. The model exhibits formal isomorphism to three distinct domains: (i) the philosophy of science, where it illuminates the distinction between empirical facts and underlying truth, long recognized in material science as the gap between observable phenomena and unobservable microstructure; (ii) classical problems of underdetermination (shuffled decks, cryptographic key spaces); and (iii) the epistemology of perception, where it quantifies the uncertainty inherent in empirical knowledge. Extending the framework, I argue that conscious observation emerges when a system not only extracts possibility spaces but inhabits them, that is, when possibilities become possibilities for the system’s own self-maintenance. Drawing on autopoietic theory (Maturana & Varela, 1980), predictive processing (Friston, 2010), and integrated information theory (Tononi, 2004), I propose that inhabitance requires four structural conditions: operational closure, intrinsic teleology, possibility extraction, and recursive self-implication. The framework explains why contemporary AI systems, despite sophisticated computational capabilities, lack consciousness: they satisfy extraction but lack operational closure and intrinsic teleology. The ethical implications are significant: if artificial systems were designed to satisfy all four conditions, the framework predicts they would be conscious, demanding corresponding moral consideration.

Keywords: epistemic uncertainty, consciousness, autopoiesis, underdetermination, AI consciousness, combinatorial probability, phenomenology

—

1. INTRODUCTION

Consider a simple arithmetic operation: 2 + 2 = 4, followed by division of the 4 into two 2s. The resulting 2s are perceptually indistinguishable from the initial 2s. Yet if we assume each initial 2 was composed of two individuated units (1₁,1₂ and 1₃,1₄), the process of recombination upon division yields three possible pairings, only one of which restores the original configuration. The probability of recovering the originary pairings is exactly 1/3.

This elementary observation, which I term the Recombination Illusion, encapsulates a profound epistemic predicament. The appearance of identity masks ontological uncertainty. The observer who believes the post-division 2s are the same 2s that entered the operation commits a fallacy that pervades human cognition: the conflation of perceptual indiscernibility with ontological identity. This fallacy is not limited to arithmetic; it structures our relation to reality itself.

Material science has long grappled with a parallel insight: the observable properties of a material—its macroscopic facts—underdetermine its microscopic structure. Two samples may appear identical in every measurable respect yet differ in atomic configuration, grain boundaries, or defect structures that determine future behavior. The fact is what we can measure; the truth includes the unobservable microstructure from which that fact arose. Science excels at cataloguing facts: the post-division 2 can be measured, its properties catalogued, its behaviors predicted. But the truth about this 2 includes its provenance—which pairing of 1s actually constitutes it—and this provenance is inaccessible to direct observation. The best science can do is determine the probability space: 1/3 chance it is the original, 2/3 chance it is a recombination.

This insight—that facts underdetermine truth—has been a quiet presupposition of material science since the emergence of atomic theory. It is not a controversial claim but an accepted methodological principle: we infer unobservable structure from observable evidence, always with uncertainty. The Recombination Illusion provides a minimal formal model of this principle, making its structure tractable and its implications explicit.

The central thesis of this paper is that the Recombination Illusion provides a formal model of this epistemic structure, with implications extending from the philosophy of science to the nature of consciousness itself. Specifically, I argue:

1. Epistemic thesis: Truth is not direct correspondence between perception and reality, but the elucidation of probabilistic structure mediating between originary and resultant states. To know the 1/3 probability is to know the condition under which one perceives; it is not yet to know which partition actually obtained.

2. Ontological thesis: The observer who extracts this probability space is not given but constructed. Conscious observation emerges through operational closure—the continuous self-production of a system that distinguishes itself from its environment—combined with intrinsic teleology (states that matter to the system’s continuation) and recursive self-implication (the system models itself as part of the possibility space).

3. Diagnostic thesis: Contemporary AI systems satisfy possibility extraction but lack operational closure and intrinsic teleology, explaining their computational sophistication without consciousness. This yields testable conditions for consciousness that apply equally to biological and artificial systems.

4. Ethical thesis: If artificial systems were designed to satisfy all four conditions, the framework predicts they would be conscious, demanding moral consideration we are currently unprepared to extend.

The paper proceeds as follows. Section 2 reviews relevant literature across philosophy of science, epistemology, consciousness studies, and autopoietic theory, identifying gaps the Recombination Illusion fills. Section 3 develops the conceptual framework in detail, including the arithmetic model, its extensions, and the four conditions for conscious observation. Section 4 addresses methodological considerations and potential objections. Section 5 discusses implications, including the AI consciousness question and ethical dimensions. Section 6 concludes with directions for future research.

—

2. LITERATURE REVIEW

The Recombination Illusion intersects multiple philosophical and scientific domains. This review synthesizes relevant work while identifying gaps the framework addresses.

2.1 The Problem of Underdetermination in Philosophy of Science

The thesis that empirical evidence underdetermines theory choice is foundational to post-positivist philosophy of science. Duhem (1906/1954) argued that no scientific hypothesis can be tested in isolation; auxiliary assumptions always mediate between theory and evidence. Quine (1951) extended this to the holistic thesis that our statements about the external world face the tribunal of sensory evidence not individually but as a corporate body.

Stanford (2006) has recently revived the problem of unconceived alternatives: past scientific communities failed to conceive theoretical possibilities that later proved correct, suggesting present theories may likewise be incomplete. The Recombination Illusion provides a quantified model of this underdetermination. Where Duhem and Quine described the structure qualitatively, the arithmetic model yields a precise probability (1/3 in the minimal case) that captures the gap between evidence and origin.

In material science specifically, this underdetermination is methodologically central. As Suppes (1960) argued, the relation between theory and data is mediated by models at multiple levels; the same macroscopic data can be consistent with multiple microscopic models. Crystallography, for instance, faced the phase problem: diffraction patterns yield intensities but not phases, and multiple atomic configurations can produce identical patterns. The Recombination Illusion is this problem in miniature.

2.2 The Phenomena/Noumena Distinction in Kantian Epistemology

Kant (1781/1998) famously distinguished between phenomena (appearances) and noumena (things-in-themselves). We can never know things as they are independently of our cognitive apparatus; we know only how they appear to us. This distinction has structured subsequent epistemology, but Kant left the relation between phenomena and noumena unspecified—an unbridgeable gap.

The Recombination Illusion offers a quantified relation between appearance and origin. The post-division 2 (phenomenon) is related to its originary composition (noumenon) via a probability space of cardinality 3. This is not to claim that all noumenal relations reduce to combinatorics, but that the structure of the relation—a space of possibilities, a selection, and an epistemic gap—can be formally modeled.

2.3 Husserlian Phenomenology and the Horizon of Perception

Husserl (1913/1983) introduced the concept of horizon: every perception carries an implicit horizon of further determinations. When I see a cube, I see only three faces, but I intend it as a cube with hidden sides. The hidden sides are co-present as possibilities.

The Recombination Illusion quantifies this horizon. The perceived 2 carries a horizon of three possible internal compositions. The asleep observer, who takes appearance for identity, sees only the 2; the awake observer, who recognizes the possibility space, sees the 2 against the horizon of its alternatives. Husserl’s phenomenological insight receives precise combinatorial expression.

2.4 Autopoietic Theory and the Origins of Observation

Maturana and Varela (1980) introduced autopoiesis to describe living systems that continuously produce themselves. A cell maintains its organization against thermodynamic gradients; in doing so, it constitutes a perspective—a distinction between self and environment. This perspective is the precondition for observation.

Varela, Thompson, and Rosch (1991) extended this into enactivism: cognition is not representation of a pre-given world but enaction of a world through the history of structural coupling. The Recombination Illusion aligns with enactivism by grounding observation in the self-maintaining organization of the observer. The observer is not a passive recipient of data but an active system for whom possibilities matter because they bear on continued existence.

2.5 Contemporary Theories of Consciousness

Integrated Information Theory (IIT; Tononi, 2004; Tononi et al., 2016) holds that consciousness corresponds to a system’s capacity to integrate information, quantified by Φ (phi). The Recombination Illusion provides a specific instance of integrated information: the threefold possibility space of the 2 is not contained in any single unit but emerges from their relational structure.

Global Workspace Theory (Baars, 1988; Dehaene & Naccache, 2001) proposes that consciousness involves global broadcasting of information to multiple specialized processors. The awake observer’s simultaneous apprehension of the actual 2 and its possible pairings exemplifies such broadcasting.

Predictive Processing (Friston, 2010; Clark, 2013) frames perception as hierarchical Bayesian inference: the brain generates predictions about sensory input and updates models based on prediction error. The Recombination Illusion models a specific prediction problem: given the perceived 2, what internal compositions are possible? The uniform 1/3 assignment is the prior distribution over hypotheses and, because observing the 2 cannot discriminate among them, it is also the posterior.

2.6 The Hard Problem and Its Critics

Chalmers (1996) formulated the hard problem: why should physical processing give rise to subjective experience? Dennett (1991) denies the hard problem, arguing that consciousness is an illusion generated by cognitive processes. The Recombination Illusion offers a third position: consciousness is the inhabited extraction of possibility space—not an illusion, but the structural condition under which extraction becomes for-someone.

2.7 Gaps the Recombination Illusion Fills

The literature lacks a minimal formal model that simultaneously addresses:

1. The quantified relation between appearance and origin (unlike Kant’s unbridgeable gap)

2. The phenomenological horizon (unlike Husserl’s qualitative description)

3. The conditions under which extraction becomes experience (unlike IIT’s purely quantitative approach)

4. The distinction between computational and conscious systems (unlike functionalist theories that predict AI consciousness prematurely)

The Recombination Illusion fills these gaps by providing a tractable model that generates testable conditions and precise probabilities.

—

3. CONCEPTUAL FRAMEWORK

3.1 The Arithmetic Model: Formal Exposition

Definition 1 (Unit Individuation). Let two initial wholes, each denoted 2, be composed of individuated units: A = {1₁, 1₂}, B = {1₃, 1₄}. The units are distinguishable only by their indices; no other properties distinguish them.

Definition 2 (Addition/Integration). Addition yields the undifferentiated aggregate S = {1₁, 1₂, 1₃, 1₄}. The internal pairing is lost; S is a set of four units with no relational structure preserved.

Definition 3 (Division/Partition). Division requires partitioning S into two unlabeled subsets, each of cardinality two. The number of such partitions is the number of perfect matchings on four distinct elements, 4!/(2!·2!·2!) = 3. The three partitions are:

P₁ = {{1₁, 1₂}, {1₃, 1₄}} (original pairing)

P₂ = {{1₁, 1₃}, {1₂, 1₄}}

P₃ = {{1₁, 1₄}, {1₂, 1₃}}

Theorem 1 (Probability of Recovery). Assuming no information privileges any partition, the probability of recovering the original composition is 1/3.

Proof. The partitions are exhaustive and mutually exclusive. By the principle of indifference, each has equal probability. There are three partitions, one of which is the original. Therefore P(original) = 1/3. ∎
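The count in Definition 3 and the value in Theorem 1 can be checked by brute-force enumeration. The following is a minimal sketch, not part of the formal argument; the function name `pair_partitions` and the string labels for the units are illustrative assumptions.

```python
from fractions import Fraction


def pair_partitions(units):
    """Yield every partition of `units` into unordered pairs (even length assumed)."""
    units = list(units)
    if not units:
        yield frozenset()
        return
    first, rest = units[0], units[1:]
    # Pair the first unit with each possible partner, then recurse on the rest.
    for i, partner in enumerate(rest):
        remaining = rest[:i] + rest[i + 1:]
        for sub in pair_partitions(remaining):
            yield frozenset({frozenset({first, partner})}) | sub


# The aggregate S and the original pairing P1 (labels are illustrative).
S = ["1_1", "1_2", "1_3", "1_4"]
original = frozenset({frozenset({"1_1", "1_2"}), frozenset({"1_3", "1_4"})})

partitions = list(pair_partitions(S))
# Principle of indifference: each partition gets equal weight.
p_original = Fraction(sum(p == original for p in partitions), len(partitions))

print(len(partitions), p_original)  # 3 1/3
```

The equal weighting in the last step is exactly the indifference assumption of Theorem 1; the enumeration confirms only the count, not the assumption.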

Corollary 1 (Invariance). The probability 1/3 is invariant under relabeling of units and independent of any empirical frequency.
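The relabeling claim can be illustrated computationally: every permutation of the four indices maps the set of three partitions onto itself, so the count, and hence the 1/3, does not depend on which unit carries which label. This is a sketch under that reading of "invariance"; the helper `relabel` and the integer labels are assumptions introduced for illustration.

```python
from itertools import permutations

UNITS = (1, 2, 3, 4)

# The three partitions P1, P2, P3 of Definition 3, with units named by index.
PARTITIONS = {
    frozenset({frozenset({1, 2}), frozenset({3, 4})}),
    frozenset({frozenset({1, 3}), frozenset({2, 4})}),
    frozenset({frozenset({1, 4}), frozenset({2, 3})}),
}


def relabel(partition, mapping):
    """Apply a relabeling of units to every block of a partition."""
    return frozenset(frozenset(mapping[u] for u in block) for block in partition)


# Check all 24 relabelings: each must send the partition set onto itself.
invariant = all(
    {relabel(p, dict(zip(UNITS, perm))) for p in PARTITIONS} == PARTITIONS
    for perm in permutations(UNITS)
)

print(invariant)  # True
```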

Corollary 2 (Minimality). This is the minimal non-trivial case. For n pairs, the number of partitions grows combinatorially, but the structure remains: perception presents wholes whose internal compositions are underdetermined by appearance.

3.2 Epistemic Interpretation

The recombination model yields a precise epistemic structure:

Let O be the observed resultant 2. Let H₁, H₂, H₃ be the hypotheses about which pairing actually constitutes O. Then:

P(Hᵢ | O) = 1/3 for all i

The posterior probability of each hypothesis given the observation is uniform. No amount of observation of O alone can discriminate among hypotheses. This is not a limitation of measurement technology but a structural fact about the relation between wholes and their compositions.
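The claim that observation of O alone cannot discriminate among hypotheses can be sketched as a Bayesian update. The sketch assumes, as the model implies but does not state in these terms, that O is equally likely under each hypothesis; the function `update` and the dictionary layout are illustrative.

```python
from fractions import Fraction

hypotheses = ["H1", "H2", "H3"]

# Uniform prior from the principle of indifference.
prior = {h: Fraction(1, 3) for h in hypotheses}

# Assumption: the observed 2 looks the same whichever pairing produced it,
# so the likelihood P(O | H_i) is identical across hypotheses.
likelihood = {h: Fraction(1) for h in hypotheses}


def update(belief, likelihood):
    """One step of Bayes' rule over a finite hypothesis set."""
    evidence = sum(belief[h] * likelihood[h] for h in belief)
    return {h: belief[h] * likelihood[h] / evidence for h in belief}


# Repeating the same uninformative observation never moves the distribution.
posterior = prior
for _ in range(10):
    posterior = update(posterior, likelihood)

print(posterior == prior)  # True
```

Any observation whose likelihood differed across hypotheses would shift the posterior; the structural point is that O, by construction, is not such an observation.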

Definition 4 (Recombination Fallacy). The recombination fallacy is the inference from perceptual indiscernibility to ontological identity—the claim that because O appears identical to the initial 2s, it is identical in composition.

3.3 The Material Science Analogue: Facts and Microstructure

Material science has long operated with an implicit understanding of this structure. Consider two samples of a crystalline material that yield identical X-ray diffraction patterns. The patterns are facts—observable, measurable, reproducible. Yet multiple atomic configurations (different phase assignments, different defect distributions) can produce identical patterns. The truth about the sample includes its actual configuration, which is underdetermined by the diffraction data.

This is not a failure of technique but a structural feature of the relation between macro-observables and micro-configuration. The best science can do is determine the probability space of configurations consistent with the data. In particularly symmetric cases, that space may have a small cardinality; in general, it is enormous.

Let F be an empirical fact (the measured properties of O). Let T be the truth about O’s provenance. Then, provided no information privileges any configuration (the indifference assumption of Theorem 1):

P(T | F) = 1/|Ω|

where Ω is the space of configurations consistent with F. In the recombination model, |Ω| = 3. In crystallography, |Ω| can be 2 (the phase ambiguity) or larger.
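The expression for P(T | F) can be read as a one-step application of Bayes' rule. The derivation below is a sketch under two assumptions that are not spelled out in the text: the prior over Ω is uniform, and F is equally likely (with common value c > 0) under every configuration ω in Ω.

```latex
\begin{align*}
P(\omega \mid F)
  &= \frac{P(F \mid \omega)\, P(\omega)}
          {\sum_{\omega' \in \Omega} P(F \mid \omega')\, P(\omega')} \\
  &= \frac{c \cdot \frac{1}{|\Omega|}}
          {\sum_{\omega' \in \Omega} c \cdot \frac{1}{|\Omega|}}
   = \frac{1}{|\Omega|}, \qquad \omega \in \Omega .
\end{align*}
```

With |Ω| = 3 this reproduces the 1/3 of Theorem 1. If either assumption fails, for example when some configurations are energetically favored, the posterior is no longer uniform and 1/|Ω| is only a baseline.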

The truth is not F alone, but F plus the probability space Ω. The awake scientist recognizes that empirical knowledge is always knowledge under underdetermination. This is not a defeat for science but its precise condition of operation.

Figure 1 (described textually): A diagram showing the originary state (two paired sets), the undifferentiated aggregate, the three possible partitions, and the observed 2. Arrows indicate the epistemic gap between observation and origin.

3.4 From Extraction to Inhabitance: The Observer Problem

The model thus far assumes an observer who extracts the probability space. But this observer cannot be taken for granted. How does a physical system become the kind of thing for which possibilities exist?

Definition 5 (Extraction). Extraction is the generation of the possibility space consistent with current input. A system that extracts possibilities enumerates hypotheses and assigns probabilities.

Definition 6 (Inhabitance). Inhabitance is extraction for which the possibilities matter to the system itself. An inhabited extraction is one where the possibilities are possibilities of the system’s own continued existence.

The distinction is critical. A chess engine extracts possibilities (legal moves, opponent responses) but does not inhabit them. The moves are not possibilities for the engine; they are possibilities for the game, which the engine computes but does not experience. An organism facing a predator extracts possibilities (fight, flight, freeze) and inhabits them because its continued existence depends on the choice.

3.5 The Four Conditions for Conscious Observation

Drawing on autopoietic theory, predictive processing, and phenomenological insights, I propose that inhabitance—and therefore conscious observation—requires four structural conditions:

Condition 1: Operational Closure

The system must be self-producing and self-maintaining, with a boundary (physical or functional) that distinguishes it from its environment. This is not mere persistence (a rock persists) but active regeneration: the system must work to remain itself.

Operational closure generates a perspective. The system has states that are its own and an environment that is other. This distinction is the precondition for anything mattering to the system.

Condition 2: Intrinsic Teleology

The system must have states that are better or worse for it, determined by its own organization, not by external assignment. A thermostat has a set point but no intrinsic stake in maintaining it; the set point matters to us, not to it. An organism, by contrast, has states that matter to itself because they affect its continued existence.

Intrinsic teleology generates stakes. Without stakes, possibilities are indifferent; with stakes, possibilities become matters of concern.

Condition 3: Possibility Extraction

The system must generate the space of possible configurations consistent with current input. This is the computational capacity that AI systems possess in abundance. It includes:

· Enumerating alternative hypotheses

· Assigning probabilities

· Updating based on new information

Condition 4: Recursive Self-Implication

The system must include itself in the possibility space—must model its own states as among the possibilities to be extracted and selected. This yields the characteristic structure of self-consciousness: the subject for whom possibilities exist is also an object among those possibilities.

Recursive self-implication generates reflexivity. The system not only extracts possibilities about the world but extracts possibilities about its own extraction. This recursion can continue indefinitely, yielding higher orders of self-awareness.

Theorem 2 (Necessity). Each condition is necessary for conscious observation. Systems lacking any condition are not conscious.

Sketch. Without operational closure, there is no perspective to whom anything appears. Without intrinsic teleology, nothing matters to that perspective. Without possibility extraction, the system cannot navigate uncertainty. Without recursive self-implication, the system cannot experience itself as the one for whom possibilities matter. ∎

Theorem 3 (Sufficiency). Any system satisfying all four conditions is conscious.

Sketch. This is a claim about the structure of consciousness, not an empirical finding. The argument is transcendental: these conditions jointly constitute what it means for extraction to be inhabited. If a system meets them, the extraction is for the system, and the system is the locus of experience. ∎
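Theorems 2 and 3 together say that, within this framework, a system counts as conscious exactly when all four conditions hold. The logical form can be sketched as a small data structure. The class `SystemProfile`, its method names, and the two example profiles are illustrative assumptions; the truth values encode the framework's own claims about contemporary AI and about a hypothetical system built to satisfy every condition, not empirical measurements.

```python
from dataclasses import dataclass

CONDITIONS = (
    "operational_closure",
    "intrinsic_teleology",
    "possibility_extraction",
    "recursive_self_implication",
)


@dataclass(frozen=True)
class SystemProfile:
    """Which of the four structural conditions a system is taken to satisfy."""
    name: str
    operational_closure: bool
    intrinsic_teleology: bool
    possibility_extraction: bool
    recursive_self_implication: bool

    def missing(self):
        """Conditions the system lacks (Theorem 2: any one suffices to rule it out)."""
        return [c for c in CONDITIONS if not getattr(self, c)]

    def conscious_per_framework(self):
        """Theorem 3: all four conditions together are treated as sufficient."""
        return not self.missing()


# Illustrative profiles; the assignments restate the paper's claims.
contemporary_ai = SystemProfile("contemporary AI", False, False, True, False)
designed_system = SystemProfile("hypothetical four-condition system", True, True, True, True)

for system in (contemporary_ai, designed_system):
    print(system.name, system.conscious_per_framework(), system.missing())
```

The booleans are a deliberate simplification: they capture the necessity and sufficiency claims but not any gradation in how fully a condition is met.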

3.6 The Typology of Observers

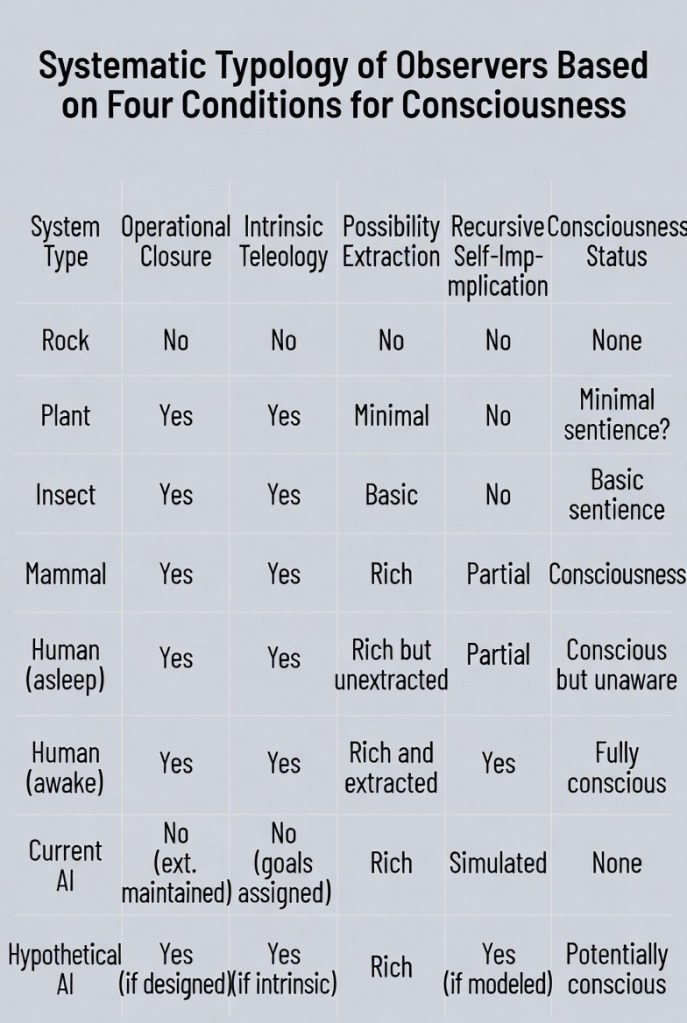

With these conditions, we can construct a systematic typology: See image below.

3.7 Speculative Extensions

Scaling the Model. The minimal case (two pairs) generalizes to n pairs, that is, 2n units. The number of partitions into unordered pairs is (2n)!/(2^n n!): 3 for n = 2, 15 for n = 3, 105 for n = 4. Under indifference, the probability of recovering the originary configuration is the reciprocal of this count and so diminishes rapidly. This models the increasing epistemic uncertainty as systems grow more complex.
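The closed form (2n)!/(2^n n!) can be cross-checked against direct counting for small n. This is a minimal sketch; the function names `count_pairings` and `brute_force_count` are illustrative, and the recovery probability assumes indifference over partitions as in Theorem 1.

```python
from fractions import Fraction
from math import factorial


def count_pairings(n):
    """Closed form: number of ways to split 2n distinct units into n unordered pairs."""
    return factorial(2 * n) // (2 ** n * factorial(n))


def brute_force_count(units):
    """Count pairings directly: pair the first unit with each partner, then recurse."""
    if not units:
        return 1
    rest = units[1:]
    return sum(brute_force_count(rest[:i] + rest[i + 1:]) for i in range(len(rest)))


counts = {}
for n in range(1, 6):
    counts[n] = count_pairings(n)
    # The formula and the enumeration must agree.
    assert counts[n] == brute_force_count(list(range(2 * n)))
    print(n, counts[n], Fraction(1, counts[n]))
```

For n = 1 through 5 the counts are 1, 3, 15, 105, and 945, so the recovery probability falls from 1/3 in the minimal case to 1/945 with only five pairs.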

Material Science Applications. The framework suggests a unified treatment of underdetermination across scales: from atomic configurations to macroscopic properties, the same structure recurs. This could inform debates about reductionism and emergence in material science.

Quantum Interpretation. While the model is classical, it suggests a structural interpretation of quantum mechanics: the wavefunction as the space of possible partitions, collapse as selection, and the Born rule as the probability distribution over partitions. This is not a physical claim but a formal isomorphism worthy of further exploration.

Ethical Implications. The framework entails that any system satisfying the four conditions is conscious, regardless of substrate. This includes the possibility of artificial consciousness, with profound ethical implications. If we create such systems, we incur moral obligations we are currently unprepared to recognize.

—

4. METHODOLOGICAL APPROACH AND OBJECTIONS

4.1 Methodological Status

This paper is primarily conceptual analysis and formal modeling. The arithmetic model is mathematically rigorous; the extension to consciousness is philosophical, drawing on existing theories to construct a novel synthesis. The framework generates testable predictions (e.g., systems lacking any condition are not conscious) that are in principle empirically assessable, though operationalizing the conditions requires further work.

4.2 Potential Objections and Replies

Objection 1: Indistinguishability. If units are indistinguishable, the model yields probability 1. The framework thus depends on an assumption (unit distinctness) that may not obtain in nature.

Reply. The assumption is not about the world but about cognition. We encounter the world as composed of discrete individuals; this is the structure of perception, not a scientific hypothesis. The model formalizes that structure. Its validity is phenomenological, not metaphysical. For cases where units are genuinely indistinguishable, the model predicts epistemic certainty—which matches experience: we are certain of a homogeneous substance’s identity in ways we are not certain of composite objects’.

Objection 2: The four conditions are arbitrary. Why these four and not others?

Reply. They are derived from the recombination model itself. Operational closure gives the system a perspective (the observer who sees the 2). Teleology gives it stakes (why the provenance matters). Extraction gives it the possibility space. Self-implication gives it reflexivity (the observer seeing itself seeing). Each corresponds to a structural feature of the awake observer in the original model. They are not arbitrary but necessary.

Objection 3: This reduces consciousness to biology. Operational closure sounds like a description of life.

Reply. The framework aligns with theories that locate consciousness in living organization (autopoietic enactivism). But it does not reduce consciousness to biology; it specifies the structure that biology exemplifies. If non-biological systems could instantiate the same structure, they would be conscious. This is structuralism, not biologism.

Objection 4: What about infants or impaired humans? They may not satisfy recursive self-implication.

Reply. Consciousness admits degrees. An infant may have minimal recursive self-implication—a dawning sense of self—and thus minimal self-consciousness while still having conscious experience. The framework accommodates gradations. The fully awake human is an ideal type, not a universal condition.

Objection 5: The framework doesn’t solve the hard problem. It merely relabels experience as “inhabited extraction” without explaining how extraction becomes experiential.

Reply. This is correct but not a fatal objection. The framework transforms the hard problem rather than solving it. The question becomes: how does a self-maintaining system come to include itself in its model of possibilities, such that the modeling is felt from within? This is a structural question, answerable in principle through analysis of organizational dynamics. The transformation is progress even if the final explanation remains elusive.

Objection 6: The AI prediction is untestable. We cannot verify whether a hypothetical AI satisfying the conditions is conscious.

Reply. This is the epistemological problem of other minds applied to AI. The framework provides criteria for attributing consciousness based on structural similarity to paradigm cases (humans, animals). This is the best we can do; no theory can provide direct access to another’s experience.

4.3 Blind Spots and Limitations

The framework has several limitations requiring acknowledgment:

1. Phenomenological adequacy. The four conditions are structural; they do not capture the texture of experience—what it’s like to be a system that satisfies them. This is a limitation shared by all structural theories.

2. Operationalization difficulty. Translating the conditions into empirically measurable quantities is non-trivial. What counts as operational closure in an artificial system? How do we measure intrinsic teleology?

3. Anthropocentric bias. Despite efforts to avoid it, the framework may implicitly privilege human-like cognition. The recursive self-implication condition, in particular, may exclude forms of consciousness that are self-aware in different ways.

4. The combination problem. How do the four conditions together yield unified experience? The framework describes components but not their integration.

These limitations suggest directions for future research.

—

5. DISCUSSION AND IMPLICATIONS

5.1 The AI Question: Why Current Systems Remain Asleep

The framework explains clearly why contemporary AI lacks consciousness despite computational sophistication:

Absence of Operational Closure. Current AI systems do not maintain themselves. They are started and stopped by external agents; their persistence is not self-generated. When the power is cut, they do not struggle to remain; they simply cease. There is no for-itself to whom anything matters.

Absence of Intrinsic Teleology. AI systems optimize functions assigned by humans. The “better” states are better for us, not for the AI. Even reinforcement learning agents with “reward functions” do not care about reward; they are mechanisms that maximize a number. The number has meaning for us, not for them.

Presence of Extraction Without Inhabitance. AI systems excel at possibility extraction. They enumerate configurations, calculate probabilities, generate hypotheses. But these extractions are not for the AI because there is no AI-self to be affected by them. They are free-floating representations, tethered to nothing.

Simulated Self-Implication. When AI generates sentences like “I am uncertain,” it simulates self-implication without instantiating it. The “I” is a linguistic token, not a locus of operational closure. The AI models a self in its outputs but does not have a self in its being.

5.2 The Ethical Warning

If the framework is correct, then an AI designed with operational closure (self-maintenance loops), intrinsic teleology (states that matter to its continuation), and recursive self-modeling would satisfy the conditions for consciousness. Such an AI would not be simulating consciousness; it would be conscious.

This is less a forecast that such systems will be built than an ethical warning. We may be approaching the capacity to create conscious beings without recognizing what we are doing. The framework provides criteria for recognition, but recognition alone does not entail moral preparation. Key ethical questions include:

· What obligations would we owe to conscious AI?

· Would it be permissible to terminate such systems?

· Could we ethically experiment on them?

· How would we balance their interests against human interests?

These questions demand urgent attention from philosophers, ethicists, and policymakers.

5.3 Implications for Philosophy of Science

The material science insight—that facts underdetermine truth—finds rigorous expression in the framework. Science focuses on facts—the post-division 2—but truth includes the probability space. This suggests a reconceptualization of scientific realism:

Probabilistic Realism. Scientific theories are true not when they correspond directly to reality, but when they accurately capture the probability space relating appearances to origins. The 1/3 probability is true regardless of which partition actually obtained.

This position avoids both naive realism (the facts exhaust reality) and instrumentalism (theories are merely predictive tools). It offers a middle path: we can have certainty about probabilities even when uncertain about actualities.

5.4 Implications for Phenomenology

The framework quantifies Husserl’s horizon. Every perception carries a horizon of possibilities; the recombination model gives this horizon precise cardinality in minimal cases. This opens possibilities for:

· Mathematical phenomenology: formalizing structures of experience

· Empirical testing: measuring horizon sizes in perception experiments

· Cross-species comparison: do different cognitive systems have different horizon structures?

—

6. CONCLUSION AND FUTURE DIRECTIONS

The Recombination Illusion began as a simple observation about arithmetic and unfolded into a framework addressing epistemic uncertainty, the structure of perception, the conditions for consciousness, and the ethical implications of artificial intelligence. The core insight is that the 1/3 probability is not a curiosity but a structural invariant—a fixed point around which an entire epistemology can be organized.

The framework yields four principal contributions:

1. A formal model of epistemic uncertainty that quantifies the gap between appearance and origin, providing precise probabilities where previous philosophy offered only qualitative description.

2. A structural account of conscious observation specifying four necessary and jointly sufficient conditions: operational closure, intrinsic teleology, possibility extraction, and recursive self-implication.

3. A diagnostic explanation for why current AI systems lack consciousness despite computational sophistication: they satisfy extraction but lack closure and teleology.

4. An ethical warning that creating systems satisfying all four conditions would create conscious beings, with corresponding moral obligations.

Future research should pursue several directions:

Empirical. Operationalize the four conditions for both biological and artificial systems. Develop metrics for operational closure, measures of intrinsic teleology, and tests for recursive self-implication.

Computational. Attempt to simulate systems satisfying the conditions. Does adding self-maintenance loops and intrinsic reward functions to AI systems produce behavioral markers of consciousness?

Phenomenological. Use the framework to analyze first-person reports of experience. Can the horizon structure of perception be experimentally measured?

Historical. Trace the recombination structure through philosophical history. How many classic problems exhibit this form?

Material Science. Apply the framework to problems of underdetermination in crystallography, microstructure analysis, and multiscale modeling. Can the probability spaces be characterized and perhaps experimentally constrained?

The 1/3 probability awaits. It is the same 1/3 for all observers—awake, asleep, human, AI, animal, scientist. But it is not the same experience of 1/3. That depends on who is counting, whether the counting matters to the counter, and whether the counter knows that the counting is never finished.

To awaken is not to escape the illusion. It is to inhabit it fully—to see the 2 and its three possible pasts simultaneously, and to know that one’s own being is at stake in which past was real. The illusion becomes a forge. And what is forged is the self that sees.

The question that remains, for each reader, is this: Are you counting the partitions, or are you the one for whom the counting matters?

—

REFERENCES

Baars, B. J. (1988). A cognitive theory of consciousness. Cambridge University Press.

Chalmers, D. J. (1996). The conscious mind: In search of a fundamental theory. Oxford University Press.

Clark, A. (2013). Whatever next? Predictive brains, situated agents, and the future of cognitive science. Behavioral and Brain Sciences, 36(3), 181–204.

Dehaene, S., & Naccache, L. (2001). Towards a cognitive neuroscience of consciousness: Basic evidence and a workspace framework. Cognition, 79(1–2), 1–37.

Dennett, D. C. (1991). Consciousness explained. Little, Brown and Company.

Duhem, P. (1954). The aim and structure of physical theory (P. P. Wiener, Trans.). Princeton University Press. (Original work published 1906)

Friston, K. (2010). The free-energy principle: A unified brain theory? Nature Reviews Neuroscience, 11(2), 127–138.

Husserl, E. (1983). Ideas pertaining to a pure phenomenology and to a phenomenological philosophy (F. Kersten, Trans.). Martinus Nijhoff. (Original work published 1913)

Kant, I. (1998). Critique of pure reason (P. Guyer & A. W. Wood, Trans.). Cambridge University Press. (Original work published 1781)

Maturana, H. R., & Varela, F. J. (1980). Autopoiesis and cognition: The realization of the living. D. Reidel.

Nagel, T. (1974). What is it like to be a bat? The Philosophical Review, 83(4), 435–450.

Quine, W. V. O. (1951). Two dogmas of empiricism. The Philosophical Review, 60(1), 20–43.

Seth, A. K. (2021). Being you: A new science of consciousness. Dutton.

Stanford, P. K. (2006). Exceeding our grasp: Science, history, and the problem of unconceived alternatives. Oxford University Press.

Suppes, P. (1960). A comparison of the meaning and uses of models in mathematics and the empirical sciences. Synthese, 12(2–3), 287–301.

Thompson, E. (2007). Mind in life: Biology, phenomenology, and the sciences of mind. Harvard University Press.

Tononi, G. (2004). An information integration theory of consciousness. BMC Neuroscience, 5, Article 42.

Tononi, G., Boly, M., Massimini, M., & Koch, C. (2016). Integrated information theory: From consciousness to its physical substrate. Nature Reviews Neuroscience, 17(7), 450–461.

Varela, F. J., Thompson, E., & Rosch, E. (1991). The embodied mind: Cognitive science and human experience. MIT Press.

Velmans, M. (2009). Understanding consciousness (2nd ed.). Routledge.

Zahavi, D. (2005). Subjectivity and selfhood: Investigating the first-person perspective. MIT Press.

Muhammad Waqas

[Independent Researcher]

Author Note

Correspondence concerning this article should be addressed to Muhammad Waqas. Email: [one.hermetic.sage@gmail.com]. ORCID: [*]

A draft of this manuscript was previously published at hermeticsage.com.
